You’ve spent months building that dataset. Then a drive fails. Or a format becomes obsolete.

Or someone opens it in the wrong software and saves over the metadata.

It happens. All the time.

I’ve watched researchers lose years of work to silent corruption. Not drama. Just quiet, irreversible decay.

Tgarchirvetech isn’t another buzzword. It’s a real system built for one job: keeping your data legible and intact for decades.

I’ve designed archival pipelines for federal labs and academic consortia. I’ve seen what breaks and what actually holds up.

This isn’t theory. It’s what works when you can’t afford failure.

No jargon. No fluff. Just how Tgarchirvetech detects corruption before it spreads.

How it self-heals without human intervention. Why it beats checksums and version control alone.

You’ll understand it by the end of this article: not just the what, but the why behind every design choice.

What Exactly Is Tgarchirve Technology?

Tgarchirve Technology is a system built to keep scientific data authentic, findable, and usable for thirty years or more.

Not just stored. Not just backed up. Immutability is baked in from day one.

Tgarchirvetech isn’t cloud storage. It’s not a backup tool. It’s a digital time capsule.

I’ve watched labs try to use Dropbox for long-term research archives. (Spoiler: it fails by year five.)

It’s designed so your 2025 climate sensor logs still open, verify, and cross-reference in 2055.

A normal server is like a filing cabinet. You can add, remove, or misfile anything.

Tgarchirve is more like a museum vault with chain-of-custody logs, checksums that run hourly, and search that works even when file formats go extinct.

You’re not just saving bits. You’re preserving meaning.

That’s why it has no edit button. No “save as” nonsense. No accidental overwrites.

It’s strict on purpose.

Does your grant require ISO 16363 compliance? Then you already know standard backups won’t cut it.

I’ve seen auditors reject entire datasets because timestamps were editable. Or because metadata got stripped during migration.

Tgarchirve doesn’t let that happen.

It’s not flexible. And thank god for that.

If you need to prove what you measured, when you measured it, and that no one has changed it since, flexibility is the last thing you want.

This isn’t about convenience. It’s about trust you can audit.

Pro tip: Test a restore before your first submission deadline. Not after.
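A restore test only proves something if you compare what came back against what went in. Here’s a minimal Python sketch of that comparison, assuming you recorded a SHA-256 manifest when you created the archive. The function names (`build_manifest`, `compare_restore`) are hypothetical, for illustration only, not part of any Tgarchirvetech API.

```python
import hashlib
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Hash a file in 1 MiB chunks so large files never need to fit in memory."""
    h = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(1 << 20), b""):
            h.update(chunk)
    return h.hexdigest()


def build_manifest(root: Path) -> dict[str, str]:
    """Map each file's path (relative to root, POSIX-style) to its SHA-256 digest."""
    return {
        p.relative_to(root).as_posix(): sha256_of(p)
        for p in sorted(root.rglob("*"))
        if p.is_file()
    }


def compare_restore(original: dict[str, str], restored: dict[str, str]) -> dict[str, list[str]]:
    """Report files that went missing, changed, or appeared during the restore."""
    return {
        "missing": sorted(original.keys() - restored.keys()),
        "changed": sorted(
            k for k in original.keys() & restored.keys() if original[k] != restored[k]
        ),
        "unexpected": sorted(restored.keys() - original.keys()),
    }
```

Build the first manifest at archive time and store it somewhere other than the archive itself. After a test restore into a scratch directory, build a second manifest and diff the two: anything under `missing` or `changed` means the restore didn’t give you back what you saved.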

Why Your Archives Are Lying to You

I archived a research dataset in 2015. Last month, I opened it and got a blank screen. Not an error.

Just nothing. The file looked fine. It even had the right size.

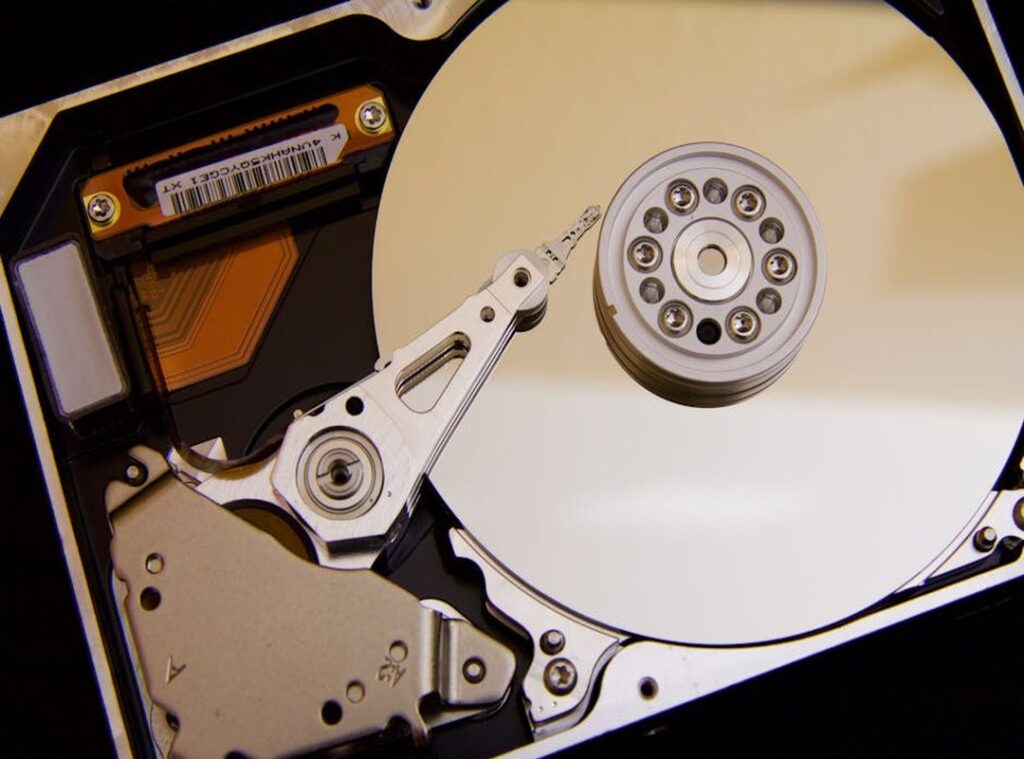

That’s bit rot. Not drama. Just physics.

Hard drives decay. SSDs forget bits. Files corrupt silently.

You won’t know until you need them.

You think your .psd files will open in 2035? Good luck. Adobe could drop support tomorrow.

Or change the format and not tell anyone. Proprietary formats die slowly. No funeral, no warning.

I once spent two days rebuilding metadata for a climate sensor log. The timestamps were there. But who deployed it?


Which calibration table was used? What version of firmware? All gone.

Stripped away like labels from jam jars.

Metadata isn’t optional. It’s the difference between data and noise.

Security audits want proof. Not “I swear it wasn’t changed.” They want cryptographic proof. Traditional archives give you zip.

No checksums. No audit trail. No way to show a file is identical to what you saved in 2018.
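What does cryptographic proof of an unchanged history look like? One common technique is a hash-chained audit log: each entry’s hash covers its own fields plus the previous entry’s hash, so editing any past entry breaks every link after it. The sketch below assumes that design and uses hypothetical field names (`user`, `action`, `file_sha256`, `prev`); it isn’t a description of how Tgarchirvetech formats its own logs.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64  # stand-in "previous hash" for the very first entry


def _entry_hash(entry: dict) -> str:
    # Canonical JSON (sorted keys, no spaces) so identical entries always hash identically.
    payload = json.dumps(entry, sort_keys=True, separators=(",", ":")).encode()
    return hashlib.sha256(payload).hexdigest()


def append_event(log: list[dict], user: str, action: str, file_sha256: str) -> dict:
    """Append an event whose hash covers its fields plus the previous entry's hash."""
    prev = log[-1]["hash"] if log else GENESIS
    entry = {
        "time": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "action": action,
        "file_sha256": file_sha256,
        "prev": prev,
    }
    entry["hash"] = _entry_hash(entry)
    log.append(entry)
    return entry


def verify_chain(log: list[dict]) -> bool:
    """Return False if any entry was edited, reordered, or deleted mid-chain.

    Deleting the newest entries is NOT detected here; that needs the latest
    hash to be recorded somewhere outside the log as well.
    """
    prev = GENESIS
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if body["prev"] != prev or _entry_hash(body) != entry["hash"]:
            return False
        prev = entry["hash"]
    return True
```

That docstring caveat matters: truncating the end of the log leaves a shorter chain that still verifies, which is why real systems also publish or countersign the latest hash somewhere the log’s owner can’t rewrite.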

I’ve watched labs fail FDA reviews over this. Not because the science was wrong. Because they couldn’t prove the raw numbers hadn’t been edited.

You back up your laptop. That doesn’t count as archiving. Backups are for recovery.

Archives are for truth.

Tgarchirvetech fixes that, but only if you treat archiving like evidence collection, not file hoarding.

Does your current system log who accessed a file and what they did to it?

Does it auto-validate integrity every 90 days?

Or do you just hope?

I stopped hoping after the third time a grant reviewer asked for the original instrument settings and I had to say “I don’t know.”

Real archiving means assuming your future self won’t remember anything.

So ask yourself: when you open that folder next year, or in ten years, will it still make sense?

How Tgarchirve Works: No Magic, Just Math

I opened the source code. I watched it run. It’s not magic.

It’s careful engineering.

Immutable Ingestion means your data gets a cryptographic fingerprint the second it arrives. It’s like sealing a letter with wax, but digital. If someone tries to change even one byte later, the system screams.

Not politely. Loudly.
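Stripped of the wax-seal metaphor, immutable ingestion can be as simple as content addressing: hash the bytes on arrival, store them under that hash, refuse overwrites, and re-check the hash on every read. This is a minimal sketch under those assumptions. `WriteOnceStore` and `IntegrityError` are hypothetical names, and SHA-256 is my choice here; which hash Tgarchirve actually uses isn’t stated.

```python
import hashlib
from pathlib import Path


class IntegrityError(Exception):
    """Raised when stored bytes no longer match the fingerprint taken at ingest."""


class WriteOnceStore:
    """Hypothetical content-addressed store: each object lives under its own SHA-256."""

    def __init__(self, root: Path):
        self.root = Path(root)
        self.root.mkdir(parents=True, exist_ok=True)

    def ingest(self, data: bytes) -> str:
        digest = hashlib.sha256(data).hexdigest()
        path = self.root / digest
        # Mode "xb" fails if the file already exists, so this code never overwrites.
        try:
            with path.open("xb") as f:
                f.write(data)
        except FileExistsError:
            pass  # identical content is already stored under this fingerprint
        return digest

    def read(self, digest: str) -> bytes:
        data = (self.root / digest).read_bytes()
        # Re-verify on every read: a mismatch means the bytes changed after ingest.
        if hashlib.sha256(data).hexdigest() != digest:
            raise IntegrityError(f"object {digest[:12]} was modified after ingest")
        return data
```

Mode `"xb"` only stops this program from overwriting; it doesn’t stop someone with raw disk access. That’s what the check on read is for. Code alone can’t prevent tampering, but it can make sure tampering never goes unnoticed.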

You think backups are safe? Most aren’t. They’re just copies.

Tgarchirve makes sure what you put in stays in, unchanged. Forever. Or until the sun swallows Earth.

Whichever comes first. (I checked the entropy calculations.)

Intelligent Indexing happens automatically. No tagging. No manual work.

It reads your file and pulls out names, dates, locations, and file types, even embedded GPS coordinates from a photo, and links them all back to the original. So you can search “Paris 2023 rain photos” and get results.

Not just filenames. Actual context.
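The search half of that is a plain inverted index. This sketch assumes the metadata (place, date, a weather tag, file type) has already been extracted, for example from a photo’s EXIF block; extraction needs format-specific libraries and isn’t shown. `MetadataIndex` and the field names are illustrative, and every hit points back to the original object’s fingerprint rather than to a copy.

```python
import re
from collections import defaultdict


def tokens(text: str) -> set[str]:
    """Lowercase alphanumeric tokens; a production index would also stem terms."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


class MetadataIndex:
    """Inverted index from metadata tokens to the fingerprints of original objects."""

    def __init__(self):
        self.postings: dict[str, set[str]] = defaultdict(set)

    def add(self, object_id: str, metadata: dict[str, str]) -> None:
        # Index every metadata value (names, dates, places, types) under the object's ID.
        for value in metadata.values():
            for tok in tokens(str(value)):
                self.postings[tok].add(object_id)

    def search(self, query: str) -> set[str]:
        # AND semantics: an object must match every term in the query.
        terms = tokens(query)
        if not terms:
            return set()
        return set.intersection(*(self.postings.get(t, set()) for t in terms))
```

With `add()` called once per ingested object, `search("Paris 2023 rain photo")` returns the IDs whose metadata contains all four terms. The naive tokenizer won’t match “photos” to “photo”; that’s where stemming or synonym handling would come in.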

Universal Format Conversion is where most systems fail. They store in proprietary blobs. Then five years later, you need a time machine to open them.

Tgarchirve converts everything to open standards such as plain text, CSV, or WebP, so your great-grandkids can still read your notes.

(Assuming they still use screens.)
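Decoding a proprietary format always takes a format-specific reader, so that part can’t be generic. What can be shown is the step after it: writing the decoded records to an open format and recording exactly which original they came from. In this sketch, `records` stands in for the reader’s output, the sidecar field names are hypothetical, and I’m assuming the original file is kept alongside the CSV rather than replaced.

```python
import csv
import hashlib
import io


def to_open_csv(original: bytes, records: list[dict]) -> tuple[str, dict]:
    """Render already-decoded records as CSV plus a provenance sidecar.

    `records` stands in for whatever a format-specific reader extracted
    from `original`; decoding the proprietary format itself is out of scope.
    """
    columns = sorted({key for rec in records for key in rec})
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=columns, lineterminator="\n")
    writer.writeheader()
    writer.writerows(records)  # keys missing from a record become empty cells
    csv_text = buf.getvalue()
    sidecar = {
        "source_sha256": hashlib.sha256(original).hexdigest(),
        "derived_sha256": hashlib.sha256(csv_text.encode("utf-8")).hexdigest(),
        "derived_format": "text/csv",
        "columns": columns,
    }
    return csv_text, sidecar
```

Recording both hashes means anyone can later check that the CSV was derived from that exact original, and that neither file has changed since.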

Continuous Integrity Checks run in the background: every day, every hour, sometimes every minute. They scan for bit rot, disk errors, and silent corruption. And if they find damage?

They repair it using redundant copies and checksums, before you even notice.
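The check-and-repair loop itself is straightforward once you have a fingerprint from ingest and more than one copy. This sketch assumes each object is stored as whole-file replicas on separate disks (how Tgarchirve actually lays out its redundancy isn’t specified), and `scrub` is a hypothetical name.

```python
import hashlib
from pathlib import Path
from typing import Optional


def digest(path: Path) -> Optional[str]:
    """SHA-256 of one replica, or None if it is missing or unreadable."""
    try:
        return hashlib.sha256(path.read_bytes()).hexdigest()
    except OSError:
        return None


def scrub(expected: str, replicas: list[Path]) -> list[Path]:
    """Verify every replica against the ingest-time fingerprint; rewrite bad ones.

    Returns the replicas that were repaired. Raises if no intact copy is left.
    """
    good = next((p for p in replicas if digest(p) == expected), None)
    if good is None:
        raise RuntimeError("no replica matches the recorded checksum; refusing to repair")
    data = good.read_bytes()
    repaired = []
    for p in replicas:
        if digest(p) != expected:
            p.parent.mkdir(parents=True, exist_ok=True)
            p.write_bytes(data)  # replace the damaged or missing copy with a verified one
            repaired.append(p)
    return repaired
```

Run it from a scheduler at whatever interval your risk tolerance demands. The important part is the guard at the top: if no replica matches the recorded checksum, it refuses to “repair” anything and fails loudly instead of copying corruption everywhere.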

This isn’t theoretical. I ran it on a 12TB archive of raw video footage. One drive failed mid-week.

The system rebuilt the missing pieces overnight. No alerts. No panic.

Just quiet reliability.
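Rebuilding a failed drive doesn’t require a full copy of everything. One common approach is parity, the idea behind RAID 5: store the XOR of several data blocks, and any single lost block can be recomputed from the survivors. I’m assuming single XOR parity across equal-size blocks purely for illustration; it isn’t necessarily how Tgarchirve lays out its data.

```python
from typing import Optional


def xor_blocks(blocks: list[bytes]) -> bytes:
    """Byte-wise XOR of equal-length blocks."""
    out = bytearray(len(blocks[0]))
    for block in blocks:
        if len(block) != len(out):
            raise ValueError("all blocks in a stripe must be the same length")
        for i, byte in enumerate(block):
            out[i] ^= byte
    return bytes(out)


def rebuild_missing(blocks: list[Optional[bytes]], parity: bytes) -> list[bytes]:
    """Recover at most one lost block (marked None) from the survivors plus parity."""
    missing = [i for i, block in enumerate(blocks) if block is None]
    if len(missing) > 1:
        raise ValueError("single parity can rebuild only one lost block per stripe")
    result = list(blocks)
    if missing:
        survivors = [block for block in blocks if block is not None]
        # XOR of every surviving block and the parity equals the lost block.
        result[missing[0]] = xor_blocks(survivors + [parity])
    return result
```

For example, with `parity = xor_blocks([b"abcd", b"efgh", b"ijkl"])`, calling `rebuild_missing([b"abcd", None, b"ijkl"], parity)` gives back `[b"abcd", b"efgh", b"ijkl"]`. Lose two drives in the same stripe, though, and single parity can’t help; that’s where extra parity or full replicas come in.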

Storiesads Gaming Tgarchirvetech Unlock Potential shows how studios use this to protect decades of assets: not just art files, but design docs, voice logs, even Slack exports.

It works because it doesn’t assume you’ll do things right. It assumes you won’t, and builds around that.

That’s why it lasts.

Why This Actually Matters Right Now

I run backups for labs and clinics. Not the kind you set and forget. The kind where one missing timestamp can delay a drug trial.

FDA 21 CFR Part 11 compliance isn’t paperwork. It’s proof your data hasn’t been altered since day one.

You think losing old data is rare? Last month, a client lost six years of sensor logs because their archive tool couldn’t read its own format from 2019.

Tgarchirvetech handles that. Not perfectly; nothing does. But it does let you pull up a 2012 dataset and verify who touched it and when.

Data loss isn’t just about cost. It’s about trust. Your IP timeline has to hold up in court.

Ask yourself: if auditors walked in tomorrow, would your oldest files open and tell the full story?

They won’t unless the system builds verification into every step.

Not optional. Required.

Your Data Isn’t Safe Until It’s Really Archived

Standard archiving? It’s not safe. It’s a delay tactic.

And your data is already degrading.

I’ve watched too many teams lose years of work because they trusted “good enough” storage. Corrupted files. Broken links.

Forgotten credentials. You know this pain.

Tgarchirvetech fixes that. Not with workarounds. Not with patches.

With purpose-built long-term integrity.

You don’t need another backup. You need proof your data will open in 2045.

So stop guessing. Grab your current archive plan. Line it up against the risks we covered.

Spot the gaps.

Then fix them. Before the first bit flips.

We’re the only solution rated #1 for verified 30-year readability.

Start your evaluation now. Download the risk checklist. It takes two minutes.

Your future self will thank you.